dirty_cat.MinHashEncoder

Usage examples at the bottom of this page.

- class dirty_cat.MinHashEncoder(n_components=30, ngram_range=(2, 4), hashing='fast', minmax_hash=False, handle_missing='zero_impute', n_jobs=None)

Encode string categorical features by applying the MinHash method to n-gram decompositions of strings.

The principle is as follows:

A string is viewed as a succession of numbers (the ASCII or UTF8 representation of its elements).

The string is then decomposed into a set of n-grams, i.e. n-dimensional vectors of integers.

A hashing function is used to assign an integer to each n-gram. The minimum of the hashes over all n-grams is used in the encoding.

This process is repeated with N hashing functions to form N-dimensional encodings.

Maxhash encodings can be computed similarly by taking the maximum hash instead. With this procedure, strings that share many n-grams have a greater probability of having the same encoding value. These encodings thus capture morphological similarities between strings.
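The steps above can be sketched in a few lines of pure Python. This is only an illustration under stated assumptions, not the library's implementation: the helper names (`ngrams`, `seeded_hash`, `minhash`, `n_equal`) are hypothetical, and a salted BLAKE2 digest stands in for the 'fast' and 'murmur' hash functions.

```python
import hashlib


def ngrams(text, ngram_range=(2, 4)):
    """Return the set of character n-grams of `text` for min_n <= n <= max_n."""
    min_n, max_n = ngram_range
    return {
        text[i:i + n]
        for n in range(min_n, max_n + 1)
        for i in range(len(text) - n + 1)
    }


def seeded_hash(gram, seed):
    """Hypothetical hash family: one BLAKE2 digest per (n-gram, seed) pair."""
    digest = hashlib.blake2b(
        gram.encode("utf-8"), digest_size=8, salt=seed.to_bytes(8, "little")
    ).digest()
    return int.from_bytes(digest, "big")


def minhash(text, n_components=30, ngram_range=(2, 4)):
    """Keep, for each of the N hash functions, the minimum hash over all n-grams."""
    grams = ngrams(text, ngram_range)
    # default=0 covers strings too short to contain any n-gram (sketch's choice).
    return [
        min((seeded_hash(g, seed) for g in grams), default=0)
        for seed in range(n_components)
    ]


def n_equal(a, b):
    """Count the components on which two encodings agree."""
    return sum(x == y for x, y in zip(a, b))


london = minhash("London")
london_uk = minhash("London, UK")
paris = minhash("Paris")

# Strings sharing many n-grams agree on more components than unrelated strings.
print(n_equal(london, london_uk), n_equal(london, paris))
```

Each component agrees with a probability that grows with the fraction of n-grams the two strings share, which is why the count of equal components tracks morphological similarity.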

- Parameters:

- n_components : int, default=30

The number of dimensions of the encoded strings. Values around 300 tend to give good prediction performance, at a higher computational cost.

- ngram_range : 2-tuple of int, default=(2, 4)

The lower and upper boundaries of the range of n-values for the n-grams used in the string similarity. All values of n such that min_n <= n <= max_n will be used.

- hashing : {'fast', 'murmur'}, default='fast'

Hashing function. fast is faster than murmur, but the entropy of its hashes may be a concern.

- minmax_hash : bool, default=False

If True, returns the min and max hashes concatenated.

- handle_missing : {'error', 'zero_impute'}, default='zero_impute'

Whether to raise an error or encode missing values (NaN) with vectors filled with zeros.

- n_jobs : int, optional

The number of jobs to run in parallel. The hash computations for all unique elements are parallelized. None means 1 unless in a joblib.parallel_backend context. -1 means using all processors. See n_jobs for more details.
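To make the effect of `minmax_hash` and `handle_missing` concrete, here is a toy encoder in pure Python. It is a sketch under assumptions and does not reproduce the library's code: the function names, the salted BLAKE2 hash, the error message, and the choice to split the components evenly between minima and maxima are all hypothetical.

```python
import hashlib
import math


def _hash(gram, seed):
    # Hypothetical seeded hash; the library's 'fast' and 'murmur' hashes differ.
    digest = hashlib.blake2b(
        gram.encode("utf-8"), digest_size=4, salt=seed.to_bytes(8, "little")
    ).digest()
    return int.from_bytes(digest, "big")


def encode(value, n_components=4, ngram_range=(2, 4),
           minmax_hash=False, handle_missing="zero_impute"):
    """Toy single-value encoder mirroring the parameters described above."""
    # Missing values: either fail loudly or return an all-zero vector.
    if value is None or (isinstance(value, float) and math.isnan(value)):
        if handle_missing == "error":
            raise ValueError("Found missing values in input data.")
        return [0] * n_components

    # All n with min_n <= n <= max_n contribute n-grams.
    min_n, max_n = ngram_range
    grams = {
        value[i:i + n]
        for n in range(min_n, max_n + 1)
        for i in range(len(value) - n + 1)
    }
    if not grams:  # string shorter than min_n: sketch's choice is zeros
        return [0] * n_components

    if minmax_hash:
        # Assumption of this sketch: half the components are minima, half maxima.
        if n_components % 2:
            raise ValueError("n_components must be even when minmax_hash=True")
        hashes = [[_hash(g, s) for g in grams] for s in range(n_components // 2)]
        return [min(h) for h in hashes] + [max(h) for h in hashes]

    return [min(_hash(g, s) for g in grams) for s in range(n_components)]


print(encode(float("nan")))                 # all zeros under 'zero_impute'
print(encode("Paris", minmax_hash=True))    # minima followed by maxima
```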

See also

dirty_cat.GapEncoder: Encodes dirty categories (strings) by constructing latent topics with continuous encoding.

dirty_cat.SimilarityEncoder: Encode string columns as a numeric array with n-gram string similarity.

dirty_cat.deduplicate(): Deduplicate data by hierarchically clustering similar strings.

References

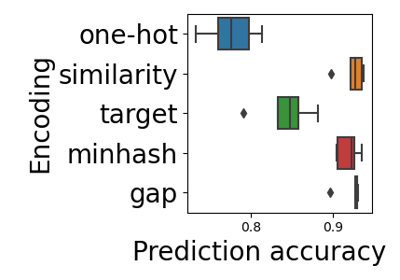

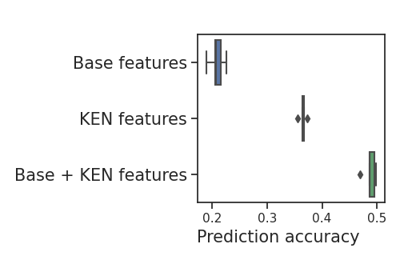

For a detailed description of the method, see Encoding high-cardinality string categorical variables by Cerda, Varoquaux (2019).

Examples

>>> from dirty_cat import MinHashEncoder
>>> enc = MinHashEncoder(n_components=5)

Let’s encode the following non-normalized data:

>>> X = [['paris, FR'], ['Paris'], ['London, UK'], ['London']]

>>> enc.fit(X)
MinHashEncoder()

The encoded data with 5 components are:

>>> enc.transform(X)
array([[-1.78337518e+09, -1.58827021e+09, -1.66359234e+09,
        -1.81988679e+09, -1.96259387e+09],
       [-8.48046971e+08, -1.76657887e+09, -1.55891205e+09,
        -1.48574446e+09, -1.68729890e+09],
       [-1.97582893e+09, -2.09500033e+09, -1.59652117e+09,
        -1.81759383e+09, -2.09569333e+09],
       [-1.97582893e+09, -2.09500033e+09, -1.53072052e+09,
        -1.45918266e+09, -1.58098831e+09]])

- Attributes:

- hash_dict_ : LRUDict

Computed hashes.

Methods

fit(X[, y]): Fit the MinHashEncoder to X.

fit_transform(X[, y]): Fit to data, then transform it.

get_params([deep]): Get parameters for this estimator.

set_output(*[, transform]): Set output container.

set_params(**params): Set the parameters of this estimator.

transform(X): Transform X using specified encoding scheme.

- fit(X, y=None)

Fit the MinHashEncoder to X.

In practice, this only initializes a dictionary that stores encodings, so that each string is hashed once and later lookups are fast.

- Parameters:

- X : array-like, shape (n_samples, ) or (n_samples, 1)

The string data to encode. Only here for compatibility.

- y : None

Unused, only here for compatibility.

- Returns:

- MinHashEncoder

The fitted MinHashEncoder instance (self).
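The caching idea behind fit and the hash_dict_ attribute can be illustrated with a small bounded dictionary. This is a minimal sketch, assuming a least-recently-used eviction policy; `SmallLRUDict`, `cached_encode`, and `slow_encode` are hypothetical names and do not reproduce the library's LRUDict class.

```python
from collections import OrderedDict


class SmallLRUDict:
    """Hypothetical bounded cache: evicts the least recently used key when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._data = OrderedDict()

    def __contains__(self, key):
        return key in self._data

    def __getitem__(self, key):
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def __setitem__(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # drop the least recently used entry


def cached_encode(value, cache, encode):
    """Compute encode(value) once per distinct string, then reuse the result."""
    if value not in cache:
        cache[value] = encode(value)
    return cache[value]


calls = []


def slow_encode(s):
    # Stand-in for the hash computation; records each real computation.
    calls.append(s)
    return [len(s)]


cache = SmallLRUDict(capacity=2)
for s in ["Paris", "Paris", "London", "Paris"]:
    cached_encode(s, cache, slow_encode)

print(calls)  # each distinct string was encoded only once
```

Because dirty categorical columns usually repeat the same strings many times, caching per unique value avoids most of the hashing work.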

- fit_transform(X, y=None, **fit_params)

Fit to data, then transform it.

Fits transformer to X and y with optional parameters fit_params and returns a transformed version of X.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Input samples.

- y : array-like of shape (n_samples,) or (n_samples, n_outputs), default=None

Target values (None for unsupervised transformations).

- **fit_params : dict

Additional fit parameters.

- Returns:

- X_new : ndarray of shape (n_samples, n_features_new)

Transformed array.

- get_params(deep=True)

Get parameters for this estimator.

- Parameters:

- deep : bool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- params : dict

Parameter names mapped to their values.

- set_output(*, transform=None)

Set output container.

See Introducing the set_output API for an example on how to use the API.

- Parameters:

- transform : {"default", "pandas"}, default=None

Configure output of transform and fit_transform.

- "default": Default output format of a transformer
- "pandas": DataFrame output
- None: Transform configuration is unchanged

- Returns:

- self : estimator instance

Estimator instance.

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it is possible to update each component of a nested object.

- Parameters:

- **params : dict

Estimator parameters.

- Returns:

- self : estimator instance

Estimator instance.
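The <component>__<parameter> form is easiest to see on a small pipeline. The sketch below assumes scikit-learn is installed and uses two of its own estimators rather than MinHashEncoder; the step names "scale" and "clf" are arbitrary choices made for illustration.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Step names ("scale", "clf") become the <component> prefixes.
pipe = Pipeline([("scale", StandardScaler()), ("clf", LogisticRegression())])

# Update parameters of nested steps through the pipeline itself.
pipe.set_params(clf__C=0.5, scale__with_mean=False)

# deep=True also lists the parameters of the contained estimators.
params = pipe.get_params(deep=True)
print(params["clf__C"], params["scale__with_mean"])

# deep=False only lists the pipeline's own parameters.
shallow = pipe.get_params(deep=False)
print(sorted(shallow))
```

The same pattern would apply to any estimator placed in a pipeline step, since set_params and get_params are inherited from the common estimator interface.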